Hi everyone,

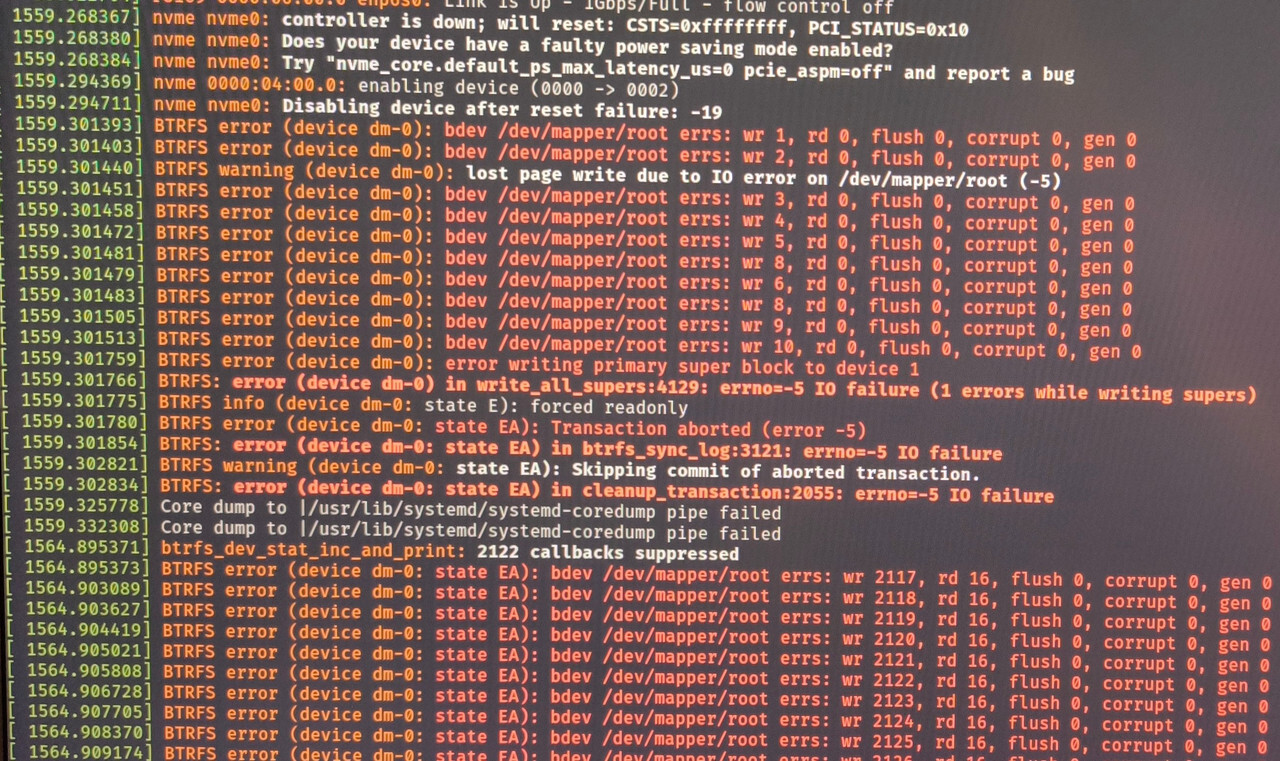

I have been experiencing some weird problems lately, starting with the OS becoming unresponsive "randomly". After reinstalling multiple times (different filesystems, XFS and BTRFS; different NVMe slots with different NVMe drives; same results), I have narrowed it down to heavy I/O operations on the NVMe drive. Most of the time I can't even pull up dmesg and have to force a shutdown, as ZSH gives an Input/Output error no matter the command. A couple of times I was lucky enough for the system to stay somewhat responsive, so that I could pull up dmesg.

It gives a "controller is down, resetting" message, which I've seen on the ArchWiki for some older Kingston and Samsung NVMe drives, along with kernel parameters to try (they didn't help much; they pretty much disable ASPM on PCIe).

What did help a bit was reverting a recent BIOS upgrade on my MSI Z490 Tomahawk: the system no longer crashes immediately under heavy I/O, but instead remounts the filesystem as ro. The issue still persists, though. I have additionally run memtest86 for 8 passes, no issues there.

I have tried running the LTS kernel, but this didn't help. The strange thing is, this error does not happen on Windows 11.

Has anyone experienced this before, and can give some pointers on what to try next? I'm at my wits' end here.

EDIT: When this issue first appeared, I assumed the Kioxia drive was defective, and the manufacturer replaced it. The issue still happens with the replacement drive, as well as with the Samsung drive. I thus assume that neither drive is defective (smartctl also seems to think so).

Here are hardware and software details:

- Arch with the latest Zen kernel, 6.7.4 (it happened with older kernels too; tried regular, LTS and Zen)

- BTRFS on LUKS

- i9-10850k

- MSI z490 Tomahawk

- GSkill 3200 MHz RAM, 32GB, DDR4

- Samsung 970 Evo 1TB & Kioxia Exceria G2 1TB (tested both drives, in both slots each, over multiple installs)

- Vega 56 GPU

- Be quiet Straight Power 11 750W PSU

SSDs not getting enough power? I'd test it with a different PSU. I had a problem with my SSD failing, and changing the PSU fixed it. Apparently the 3.3 V DC rail is routed on the motherboard straight to M.2/PCIe devices without any conversion.

Unfortunately I don't have a spare PSU, but I might try to measure the 3.3 volt rail with a multimeter (don't own an oscilloscope unfortunately) while under load and see what happens

You could also try one of those USB-to-M.2 adapters and see if that works.

Yeah, an oscilloscope would be handy for hunting spikes; it's a bit harder with a standard multimeter. Are you sure you don't know anyone with a spare PSU to borrow?

Happens with both drives, I have tried each possible permutation (Samsung in slot 1 and 2, kioxia in slot 1 and 2, and even only installing one drive at a time)

Boot a live ISO with the flags recommended in the kernel message and do some tests on the bare drives. That way you won't have the filesystem and subsequently the rest of the system giving out on you while you're debugging.

Which tests are you referring to exactly? I have read about badblocks, for example, and that it's not much use for SSDs in general: bad-block remapping happens automatically in the drive's controller, so bad blocks stay invisible to the OS. SMART values look great for both drives, about 20 TBW on the Samsung drive, and a lot less on the Kioxia drive.

I'd start by generating some synthetic workloads such as writing some sequential data to it and then reading it back a few times.

badblocks concerns partial failure of the device, where (usually) just a few blocks misbehave while the rest remains accessible. The failure mode seen here is that the entire drive becomes inaccessible, and it's likely not due to the drive itself but to how it's connected.

If synthetic loads fail to reproduce the error, I'd put a filesystem on it and copy over some real data perhaps. Put on some load that mimics a real system somehow, to try and get it to fail without the OS actually being run off the drive.
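To make the "write sequential data, then read it back a few times" idea concrete, here is a minimal sketch, assuming Python is available on the live ISO. The function names (`write_pattern`, `read_digest`, `stress`) and the 1 MiB chunk size are made up for illustration. It writes a reproducible pattern, then re-reads it and compares SHA-256 digests. Point it at a file on a scratch filesystem; pointing it at a raw device node would overwrite whatever is on that drive.

```python
import hashlib
import os

CHUNK = 1024 * 1024  # 1 MiB per write/read; arbitrary choice for this sketch


def write_pattern(path, total_bytes, seed=0):
    """Write total_bytes of reproducible data sequentially; return its SHA-256."""
    digest = hashlib.sha256()
    written = 0
    counter = seed
    with open(path, "wb") as f:
        while written < total_bytes:
            size = min(CHUNK, total_bytes - written)
            # Derive each chunk from a counter so chunks differ from one another.
            block = hashlib.sha256(counter.to_bytes(8, "little")).digest()
            data = (block * (size // len(block) + 1))[:size]
            f.write(data)
            digest.update(data)
            written += size
            counter += 1
        f.flush()
        os.fsync(f.fileno())  # push the data out to the device before returning
    return digest.hexdigest()


def read_digest(path, total_bytes):
    """Read the first total_bytes of path sequentially; return their SHA-256."""
    digest = hashlib.sha256()
    remaining = total_bytes
    with open(path, "rb") as f:
        # Best-effort hint to drop cached pages so reads are more likely to
        # hit the drive. It is only a hint; some reads may still be cached.
        if hasattr(os, "posix_fadvise"):
            try:
                os.posix_fadvise(f.fileno(), 0, 0, os.POSIX_FADV_DONTNEED)
            except OSError:
                pass
        while remaining > 0:
            data = f.read(min(CHUNK, remaining))
            if not data:
                break
            digest.update(data)
            remaining -= len(data)
    return digest.hexdigest()


def stress(path, total_bytes, read_passes=3):
    """Write once, read back read_passes times; True if every pass matches."""
    expected = write_pattern(path, total_bytes)
    return all(read_digest(path, total_bytes) == expected
               for _ in range(read_passes))
```

Something like `stress("/mnt/scratch/test.bin", 8 * 1024**3)` (path hypothetical) would write 8 GiB and verify it three times. If the controller drops out mid-run, expect an `OSError` rather than a clean `False`, which is itself the signal you're looking for.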

Thanks, I'll try that. I loaded the drive using dd a couple of times, and that did bring the system down a couple of times. I was writing to the filesystem though, while the system was booted

Did you boot with the kernel flags from the log?

Could you show the dmesg from the point onwards when the drive dropped out?
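One quick way to double-check that the flags actually took effect is to compare them against `/proc/cmdline` on the running system. A minimal sketch follows. The `WANTED` list is an assumption on my part (these are the flags the kernel's NVMe timeout message commonly suggests); replace it with whatever your own log says. The helper names are made up.

```python
# Assumed flags; replace with the ones printed in your own kernel log.
WANTED = ["nvme_core.default_ps_max_latency_us=0", "pcie_aspm=off"]


def missing_flags(cmdline, wanted):
    """Return the entries of wanted that do not appear in the command line."""
    present = set(cmdline.split())
    return [flag for flag in wanted if flag not in present]


def running_cmdline(path="/proc/cmdline"):
    """Read the command line the running kernel was booted with (Linux only)."""
    with open(path) as f:
        return f.read().strip()
```

Running `print(missing_flags(running_cmdline(), WANTED))` on the booted system prints an empty list if every flag made it onto the kernel command line.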

I did, yes, but to no avail. The dmesg output I posted is from after the drive was remounted as ro, and is the best I could get. After some time, the system stops responding completely.

Your system stops responding even if it's not booted from those drives but a live ISO?

I had a similar issue back around 2018 with a Samsung NVMe SSD that came stock from Dell with an XPS. Another guy from a Reddit thread and I ended up emailing with a guy from Canonical and Dell. The troubleshooting email chain went on for almost a year, after which I just switched to a WD Black and haven't had the issue since (still using the same WD Black to this day).

Anyhow, they ended up moving the discussion to launchpad, maybe perusing there might help you troubleshoot: https://bugs.launchpad.net/ubuntu/+source/linux/+bug/1746340

Have you tried booting from a live image? I’d try downloading something with a live option like Ubuntu to a flash drive, and then trying to mount the drive from that. Anecdotally I had massive issues with Manjaro a while back where it would “lose” access to entire usb bays on the motherboard that didn’t happen in Debian etc.

This is probably a strange question, but did you memtest86 your memory already?

The only thing I can think of is to try the drives in a different system and see how they behave (same OS and configuration).

If they behave the same then that rules out everything except the drives themselves and the OS.

Considering how you mentioned the behavior is better in Windows, it sounds like a software issue, but you never know until you try.

The other way to look at it is to stick the drives into a usb enclosure. That gets you away from the PC's 3v3 rail. If you then hang the drive enclosure off of a powered hub/dock, you are definitely way outside of the PC's power supply problems.

Here's one that I have, hopefully it's still made halfway good. https://www.amazon.com/gp/product/B08G14NBCS/

Unfortunately I have no other system at hand at the moment that's able to accept nvme drives :( I could try using windows for a couple of days see whether the issue is really linux-related, but I am trying to avoid that lol

Maybe even a PCIe passthrough to a VM could do the trick if you're desperate lol (with Linux living on a separate drive)

Orrrr maybe even try FreeBSD... (or mac OS, but eww gross don't test that)